DaVinci Magihuman

Speed by Simplicity: A Single-Stream Architecture for Fast Audio-Video Generative Foundation Models.

A New Era of Generative AI

DaVinci Magihuman is a generative foundation model built on a single-stream Transformer architecture. It has no cross-attention layers and no multi-stream processing. Instead, it treats text, video, and audio tokens as one unified sequence, which speeds up processing and keeps the different data types closely coordinated.
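As a rough illustration of the single-stream idea, the sketch below concatenates tokens from the three modalities into one sequence and builds a self-attention mask over it. The `Token` class, the token counts, the text-video-audio ordering, and the fully open mask are assumptions made for illustration; the source does not specify them.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Token:
    modality: str  # "text", "video", or "audio"
    index: int     # position within its own modality


def build_single_stream(num_text: int, num_video: int, num_audio: int) -> List[Token]:
    """Concatenate tokens from all modalities into one sequence (assumed order)."""
    sequence: List[Token] = []
    for modality, count in (("text", num_text), ("video", num_video), ("audio", num_audio)):
        sequence.extend(Token(modality, i) for i in range(count))
    return sequence


def full_self_attention_mask(sequence: List[Token]) -> List[List[bool]]:
    """Assumption: every token may attend to every other token, whatever its modality."""
    n = len(sequence)
    return [[True] * n for _ in range(n)]


seq = build_single_stream(num_text=3, num_video=4, num_audio=2)
mask = full_self_attention_mask(seq)
print(len(seq), [t.modality for t in seq])
```

Because all tokens share one sequence, a single self-attention pass can relate a spoken word to a video frame without a separate cross-attention module.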

The model consists of 15 billion parameters and 40 Transformer layers. This scale allows it to capture the nuances of human performance, from subtle facial expressions to natural speech patterns. The goal of the project is to provide a fast and reliable framework for creating digital human content that is both visually impressive and technically sound.

Efficiency is at the heart of DaVinci Magihuman. Traditional models often struggle with the heavy computational requirements of generating high-resolution video and audio simultaneously. Our approach simplifies this by using shared parameters for most of the generation process, significantly reducing the overhead and making real-time generation more achievable on modern hardware.

By releasing the complete model stack, including the base model, distilled versions, and the inference code, the team aims to encourage innovation in the field of audio-video generation. This open-source approach ensures that researchers and developers can build upon this foundation to create even more sophisticated applications.

Core System Highlights

The unified architecture processes text, video, and audio via self-attention only. Omitting cross-attention and multi-stream complexity leads to faster inference and tighter integration of the different data modalities.

The model focuses on expressive facial movements, natural speech-expression coordination, and realistic body motion. It keeps audio and video synchronized throughout the generation process.

The model generates a five-second 256p video in about two seconds on a single H100 GPU. Higher resolutions such as 1080p take under 40 seconds, making it one of the fastest models in its class.

The Science of Simplification: Architecture

Sandwich Architecture Details

The Sandwich Architecture is a defining feature of the model. It uses modality-specific projections in the first and last four layers to handle the unique characteristics of text, image, and audio data. However, the middle 32 layers share parameters across all modalities. This approach allows the model to learn a unified representation of the content while still respecting the specific needs of each data type at the input and output stages.
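The layer split described above can be written out as a small layer plan. This is only a bookkeeping sketch: the function name and the labels `"modality_specific"` and `"shared"` are illustrative, not names from the released code.

```python
from collections import Counter
from typing import List


def sandwich_layer_plan(num_layers: int = 40, edge_layers: int = 4) -> List[str]:
    """Label each layer as modality-specific (first/last edge layers) or shared (middle)."""
    if 2 * edge_layers > num_layers:
        raise ValueError("edge layers cannot exceed the total number of layers")
    plan = []
    for i in range(num_layers):
        is_edge = i < edge_layers or i >= num_layers - edge_layers
        plan.append("modality_specific" if is_edge else "shared")
    return plan


plan = sandwich_layer_plan()
counts = Counter(plan)
print(counts["modality_specific"], counts["shared"])  # 8 32
```

With the stated sizes, 4 + 32 + 4 accounts for all 40 Transformer layers.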

Timestep-Free Denoising

Unlike traditional denoising models that require explicit timestep embeddings, DaVinci Magihuman infers the denoising state directly from the input latents. This removes the need for extra embeddings and simplifies the generation pipeline. The model can look at the current state of the noisy input and determine how much more work is needed to reach the final clear output, which makes the process more stable and easier to manage.
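The toy sketch below illustrates only the interface difference: the denoiser receives the latents and nothing else, and works out how noisy they are from the latents themselves. It is not the model's learned mechanism. The toy denoiser cheats by knowing the clean target, and the function names, the RMS-based noise estimate, and the fixed step size are all assumptions made for illustration.

```python
import math
import random
from typing import List


def noise_level(latents: List[float], clean: List[float]) -> float:
    """RMS distance between the latents and the clean target."""
    return math.sqrt(sum((x - c) ** 2 for x, c in zip(latents, clean)) / len(latents))


def toy_denoiser(latents: List[float], clean: List[float]) -> List[float]:
    """Return a unit-RMS noise direction from the latents alone (no timestep input).

    Toy assumption: the clean target is known; a real network would have to learn it.
    """
    level = noise_level(latents, clean)
    if level == 0.0:
        return [0.0] * len(latents)
    return [(x - c) / level for x, c in zip(latents, clean)]


def sample(latents: List[float], clean: List[float], num_steps: int = 8) -> List[float]:
    """Run a fixed number of denoising steps without ever passing a timestep."""
    x = list(latents)
    step_size = 1.0 / num_steps
    for _ in range(num_steps):
        direction = toy_denoiser(x, clean)  # the call carries no timestep argument
        x = [xi - step_size * di for xi, di in zip(x, direction)]
    return x


rng = random.Random(0)
clean = [0.0] * 1024
noisy = [rng.gauss(0.0, 1.0) for _ in range(1024)]
result = sample(noisy, clean)
print(noise_level(noisy, clean), noise_level(result, clean))
```

In a timestep-conditioned design, the call inside the loop would also need the current step or noise level as an argument. Here that information is recovered from the input.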

Per-Head Gating and Stability

To ensure training stability at the 15-billion parameter scale, the model uses learned scalar gates with sigmoid activation on each attention head. This allows the model to dynamically adjust the influence of different parts of the network during the generation process. This mechanism helps prevent training issues that can occur in large Transformer models and contributes to the high quality of the final video and audio.
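A minimal sketch of per-head gating, assuming the gate is a single learned logit per head whose sigmoid scales that head's output. Where exactly the gate sits in the attention block is not stated in the source, and the names below are illustrative.

```python
import math
from typing import List


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gate_heads(head_outputs: List[List[float]], gate_logits: List[float]) -> List[List[float]]:
    """Scale each attention head's output by sigmoid(logit) for that head."""
    if len(head_outputs) != len(gate_logits):
        raise ValueError("need exactly one gate logit per head")
    gated = []
    for output, logit in zip(head_outputs, gate_logits):
        g = sigmoid(logit)  # scalar in (0, 1)
        gated.append([g * value for value in output])
    return gated


heads = [[1.0, 2.0], [3.0, 4.0]]  # two heads, two features each
logits = [0.0, 10.0]              # hypothetical learned parameters
gated = gate_heads(heads, logits)
print(gated)
```

A logit of 0 halves a head's contribution, while a large positive logit lets it pass almost unchanged, so training can damp individual heads smoothly.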

Unified Conditioning System

Conditioning signals, such as text prompts or reference images, are handled through a minimal unified system. There are no dedicated conditioning branches, which reduces the complexity of the model and avoids the need for specialized cross-attention layers. This design choice further emphasizes the theme of speed through simplicity.

Quantitative Performance Benchmarks

| Model | Visual Quality (Higher is better) | Text Alignment (Higher is better) | Physical Consistency | WER (Lower is better) |
|---|---|---|---|---|
| Ovi 1.1 | 4.73 | 4.10 | 4.41 | 40.45% |
| LTX 2.3 | 4.76 | 4.12 | 4.56 | 19.23% |
| DaVinci Magihuman | 4.80 | 4.18 | 4.52 | 14.60% |

Human Evaluation Results

Our pairwise human evaluation involved 2,000 comparisons between models. DaVinci Magihuman achieved an 80.0% win rate against Ovi 1.1 and a 60.9% win rate against LTX 2.3. In other words, human raters preferred its output in most head-to-head comparisons against both baselines.

Word Error Rate (WER)

The model shows a significant reduction in Word Error Rate, scoring 14.60% compared to 40.45% for Ovi 1.1 and 19.23% for LTX 2.3. A lower WER means the generated speech matches the intended dialogue more closely and is easier to understand, which improves the experience when creating digital speakers.
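For reference, WER is conventionally computed as the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and a hypothesis transcript, divided by the number of reference words. The sketch below implements that standard definition. The source does not say which speech recognizer or text normalization was used for the benchmark, so this is not the exact evaluation script.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref: List[str] = reference.split()
    hyp: List[str] = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("welcome to the future", "welcome to a future"))  # 0.25
```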

Inference Speed Breakdown

Generation speed is measured for a five-second video on a single Nvidia H100 GPU. The results demonstrate the efficiency of our multi-stage pipeline and specialized compiler techniques.

| Resolution | Base Generation (s) | Super-Resolution (s) | VAE Decoding (s) | Total Time (s) |
|---|---|---|---|---|
| 256p | 1.6 | n/a | 0.4 | 2.0 |
| 540p | 1.6 | 5.1 | 1.3 | 8.0 |
| 1080p | 1.6 | 31.0 | 5.8 | 38.4 |
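The totals in the table are the sums of the three stages. The short sketch below reproduces them and also relates each total to the five-second clip length. The dictionary layout and function names are illustrative, and the 256p super-resolution time is entered as 0.0 because that stage is not run at 256p.

```python
VIDEO_SECONDS = 5.0  # clip length used for the measurements

# Stage timings in seconds, copied from the table above.
stage_times = {
    "256p": {"base": 1.6, "super_resolution": 0.0, "vae_decode": 0.4},
    "540p": {"base": 1.6, "super_resolution": 5.1, "vae_decode": 1.3},
    "1080p": {"base": 1.6, "super_resolution": 31.0, "vae_decode": 5.8},
}


def total_time(stages: dict) -> float:
    """Sum the stage timings, rounded to the table's one-decimal precision."""
    return round(sum(stages.values()), 1)


def realtime_factor(stages: dict) -> float:
    """Seconds of video produced per second of compute (above 1.0 is faster than real time)."""
    return VIDEO_SECONDS / total_time(stages)


for resolution, stages in stage_times.items():
    print(resolution, total_time(stages), round(realtime_factor(stages), 3))
```

On these numbers, only the 256p setting produces video faster than its playback length. The base generation cost stays at 1.6 s at every resolution, and super-resolution dominates at 1080p.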

Speed Optimization Techniques

- Latent-Space Super-Resolution: A two-stage pipeline that generates content at a low resolution and then refines it in latent space. This avoids the need for an extra round trip through the VAE, saving valuable time.

- Turbo VAE Decoder: A lightweight, re-trained decoder designed to handle the final stage of generation much faster than standard decoders, significantly reducing the overhead of the last step.

- MagiCompiler Optimization: The MagiCompiler fuses operators across different Transformer layers, which results in a 1.2x speedup by reducing the amount of data that needs to be moved around.

- Model Distillation (DMD-2): Distillation allows the model to generate high-quality content using only 8 denoising steps without sacrificing the detail or accuracy of the final output.
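To make the "extra round trip through the VAE" concrete, the sketch below compares two stage orderings and counts their VAE passes. Both stage lists are assumptions: the pixel-space variant is a hypothetical baseline for comparison, and the stage names are illustrative, not the pipeline's actual module names.

```python
from typing import List

# Hypothetical baseline: upscale in pixel space, then re-encode to refine.
PIXEL_SPACE_SR: List[str] = [
    "base_generation",
    "vae_decode",
    "pixel_upscale",
    "vae_encode",
    "refine",
    "vae_decode",
]

# Latent-space variant as described above: stay in latent space until the end.
LATENT_SPACE_SR: List[str] = [
    "base_generation",
    "latent_upscale",
    "refine",
    "vae_decode",
]


def count_vae_passes(stages: List[str]) -> int:
    """Count how many stages run the VAE encoder or decoder."""
    return sum(1 for stage in stages if stage.startswith("vae_"))


saved = count_vae_passes(PIXEL_SPACE_SR) - count_vae_passes(LATENT_SPACE_SR)
print(count_vae_passes(PIXEL_SPACE_SR), count_vae_passes(LATENT_SPACE_SR), saved)
```

Under these assumptions, the latent-space ordering needs a single decode at the end, and it skips one decode and one encode.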

Getting Started with DaVinci Magihuman

Docker Installation (Recommended)

Using Docker ensures a consistent environment with all necessary dependencies pre-configured. This is the fastest way to get the system running.

```bash
# Pull the prebuilt image
docker pull sandai/magi-human:latest

# Run the container with GPU support
docker run -it --gpus all --network host --ipc host \
  -v /path/to/repos:/workspace \
  -v /path/to/checkpoints:/models \
  --name my-magi-human \
  sandai/magi-human:latest \
  bash

# Install optimization tools
git clone https://github.com/SandAI-org/MagiCompiler.git
cd MagiCompiler
pip install -r requirements.txt
pip install .
```

Conda Environment Setup

If you prefer a manual setup, you can create a specialized Conda environment. This requires more steps but offers more control over the installation.

```bash
# Create and activate environment
conda create -n davinci-magihuman python=3.12
conda activate davinci-magihuman
conda install ffmpeg

# Install main libraries
pip install torch==2.10.0 torchvision==0.25.0

# Clone the repository
git clone https://github.com/GAIR-NLP/daVinci-MagiHuman
cd daVinci-MagiHuman
pip install -r requirements.txt
```

Downloading Model Checkpoints

Finalize your setup by downloading the 15B-parameter model stack from our project page. You will also need external models including the text encoder (t5gemma-9b-9b-ul2) and the audio model (stable-audio-open-1.0) to complete the generation pipeline. Update your configuration files under the example directory to point to these local files before running your first test.
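Before running a first test, it can help to confirm that every path referenced in your configuration exists. The helper below is a small standalone check, not part of the project. The dictionary keys and the directory names under `/models` are hypothetical placeholders, so substitute the locations you actually used.

```python
from pathlib import Path
from typing import Dict, List


def missing_checkpoints(paths: Dict[str, str]) -> List[str]:
    """Return the names of entries whose path does not exist on disk."""
    return [name for name, location in paths.items() if not Path(location).expanduser().exists()]


# Hypothetical layout: adjust to wherever you stored the downloads.
checkpoint_paths = {
    "base_model": "/models/davinci-magihuman",
    "text_encoder": "/models/t5gemma-9b-9b-ul2",
    "audio_model": "/models/stable-audio-open-1.0",
}

missing = missing_checkpoints(checkpoint_paths)
if missing:
    print("Missing:", ", ".join(missing))
else:
    print("All checkpoint paths found.")
```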

Advanced Usage and Prompting

DaVinci Magihuman uses an Enhanced Prompt system that expands a simple user request into specific performance directions. An enhanced prompt has three parts: a detailed description, the spoken dialogue, and the atmospheric sound.

Detailed Description

A chronological description of the character's appearance, their facial movements, vocal style, and the camera setup. This section should be concise and descriptive, providing the model with a clear visual and performance map.

Spoken Dialogue

The exact lines you want the character to speak, structured with language tags and speaker descriptions. This allows for accurate lip-sync and character-specific vocal performance in multiple supported languages.

Atmospheric Sound

Specifies the ambient noises for the scene. You can describe sounds like city traffic, quiet office hums, or natural settings, or specify a silent background if that is preferred for your content.

Sample Prompt Output

A person with professional attire stands in a neutral setting. They speak with a calm, informative tone, with subtle gestures that emphasize key points. Their facial expressions remain focused and clear...

Dialogue: "Welcome to the future of generative media. This is a demonstration of our single-stream technology."

Background Sound: "A quiet, professional interior setting."
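A small helper can assemble the three parts in the same layout as the sample above. The `Dialogue:` and `Background Sound:` labels are copied from the sample. The function itself is hypothetical, and because the source does not show the syntax for language tags or speaker descriptions, none is invented here.

```python
def build_enhanced_prompt(description: str, dialogue: str, background_sound: str) -> str:
    """Join the three prompt parts using the labels shown in the sample output."""
    parts = [
        description.strip(),
        f'Dialogue: "{dialogue.strip()}"',
        f'Background Sound: "{background_sound.strip()}"',
    ]
    return "\n\n".join(parts)


prompt = build_enhanced_prompt(
    description="A person in professional attire stands in a neutral setting.",
    dialogue="Welcome to the future of generative media.",
    background_sound="A quiet, professional interior setting.",
)
print(prompt)
```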

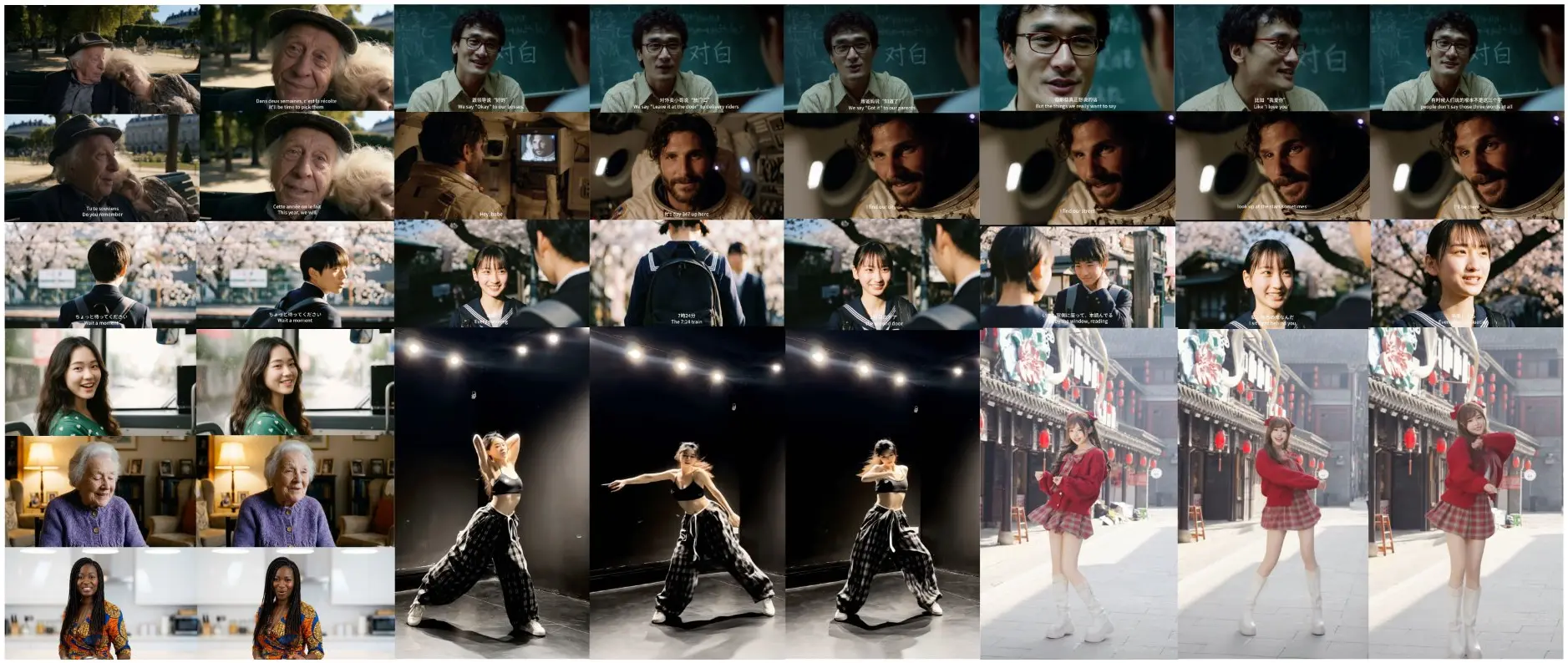

Visual Demonstrations

Watch DaVinci Magihuman in action across various scenarios, showcasing its ability to generate high-quality video and audio together and keep them closely synchronized.

Emotional Expression Synthesis

Demonstrating subtle nuances in facial performance and emotional transition.

High-Fidelity Lip Sync

Close synchronization between generated audio and mouth movements.

Natural Body Motion

Realistic human physical activity and balanced movement patterns.

Complex Gesture Control

Coordinated hand and arm movements for expressive communication.

Contextual Performance

Environment-aware human interaction and realistic spatial positioning.

Full Architecture Snapshot

High-resolution representation of the single-stream model output.

Building a Unified Future Together

DaVinci Magihuman is more than just a model; it is a testament to the power of simpler architectures in solving complex generative problems. By focusing on speed, coordination, and a unified representation of data, we provide a foundation for the next generation of digital media and human interaction. Our commitment to open-source development ensures that these advancements remain accessible to the entire research community, fostering an environment of shared progress and innovation.

As we continue to refine the model and its optimization techniques, we invite developers, researchers, and creators to push the boundaries of what is possible with digital humans. If you are building sophisticated communication tools, interactive experiences, or high-fidelity media content, DaVinci Magihuman offers the performance and reliability you need to bring your vision to life. The era of fast, high-quality audio-video generation is here, and we are excited to see what the community creates with this unified single-stream foundation.